AMD Highlights Growing Role of CPUs in the Era of Agentic AI

As agentic AI systems become more common in modern data centers, CPUs are gaining renewed importance in managing and coordinating complex AI workflows. According to AMD, while GPUs remain critical for high-performance AI processing, CPUs play a vital role in orchestrating the entire AI infrastructure.

Agentic AI refers to intelligent systems capable of planning, making decisions, and executing tasks autonomously. This new generation of AI relies on powerful GPUs for computation but requires high-performance CPUs to manage data flow, scheduling, memory, and overall system operations.

CPUs and GPUs Working Together

In modern AI clusters, CPUs act as central coordinators, handling data preparation, workload scheduling, memory management, and system control, while GPUs focus on the intensive parallel processing behind AI training and inference.

During AI training, GPUs handle most of the heavy computation while CPUs manage system resources and supply data efficiently. As AI workloads shift toward inference and real-time decision making, CPUs take on a larger role in processing results, coordinating multi-step workflows, and managing communication between applications and AI models.
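
The division of labor described above can be illustrated with a minimal Python sketch. It is not AMD's software or any real framework's API: the `Step` type, `prepare`, `run_on_accelerator`, and `orchestrate` are hypothetical names invented for this example, and the "accelerator" call is a plain CPU function standing in for a GPU inference request. The point is only to show the CPU-side work: preparing data, scheduling steps, and collecting results.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Step:
    """One step of a hypothetical multi-step agent workflow."""
    name: str
    payload: list[float]


def prepare(raw: list[float]) -> list[float]:
    """CPU-side data preparation: scale non-negative inputs into [0, 1]."""
    peak = max(raw) or 1.0  # avoid dividing by zero for all-zero input
    return [x / peak for x in raw]


def run_on_accelerator(batch: list[float]) -> float:
    """Hypothetical stand-in for a GPU inference call; here it just sums."""
    return sum(batch)


def orchestrate(steps: list[Step]) -> dict[str, float]:
    """Prepare each step's data, dispatch it, and gather the results."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        # Scheduling: the CPU prepares each batch and submits it for compute.
        futures = {
            step.name: pool.submit(run_on_accelerator, prepare(step.payload))
            for step in steps
        }
        # Result handling: the CPU waits for and collects every outcome.
        return {name: future.result() for name, future in futures.items()}


if __name__ == "__main__":
    plan = [Step("retrieve", [2.0, 4.0]), Step("summarize", [1.0, 1.0, 2.0])]
    print(orchestrate(plan))
```

In a real deployment the stand-in function would be replaced by a call into an inference runtime, and the orchestration layer would also handle retries, memory placement, and communication with other services.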

AMD EPYC Driving Balanced AI Infrastructure

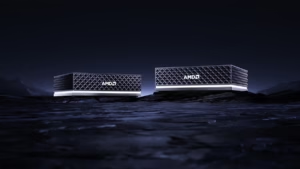

AMD highlighted its EPYC processors as a key foundation for modern AI data centers. These CPUs are designed to work alongside AMD Instinct GPUs, AMD Pensando networking technologies, and the AMD ROCm software stack to create balanced AI infrastructure.

According to AMD’s internal estimates, systems powered by 5th Gen AMD EPYC CPUs can deliver up to 2.1× higher performance per core and up to 2.26× better performance per watt compared with systems based on the Nvidia Grace Superchip.
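
To put the performance-per-watt figure in more concrete terms, the short calculation below converts it into relative energy use for a fixed amount of work. It assumes that performance per watt is equivalent to work done per unit of energy, and it uses the best-case "up to" value from AMD's estimate, so actual savings on a given workload may be smaller. The function name `relative_energy` is made up for this illustration.

```python
def relative_energy(perf_per_watt_gain: float) -> float:
    """Energy needed for the same work, relative to a baseline of 1.0."""
    if perf_per_watt_gain <= 0:
        raise ValueError("gain must be positive")
    return 1.0 / perf_per_watt_gain


# Best-case figure quoted from AMD's internal estimate.
AMD_UPPER_BOUND = 2.26

ratio = relative_energy(AMD_UPPER_BOUND)
print(f"{ratio:.2f} of baseline energy, about {1 - ratio:.0%} less")
```

Under those assumptions, the same job would take roughly 44% of the baseline system's energy in the best case.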

Preparing for Next-Generation AI Workloads

Looking ahead, AMD is developing its next-generation EPYC processors, codenamed "Venice", which will power the upcoming AMD Helios platform.

As AI adoption continues to grow, AMD believes balanced computing architectures—combining CPUs, GPUs, networking, and open software ecosystems—will be essential for delivering scalable, energy-efficient AI performance in future data centers.